1.58-bit FLUX: The Future of Efficient Text-to-Image AI

Introduction

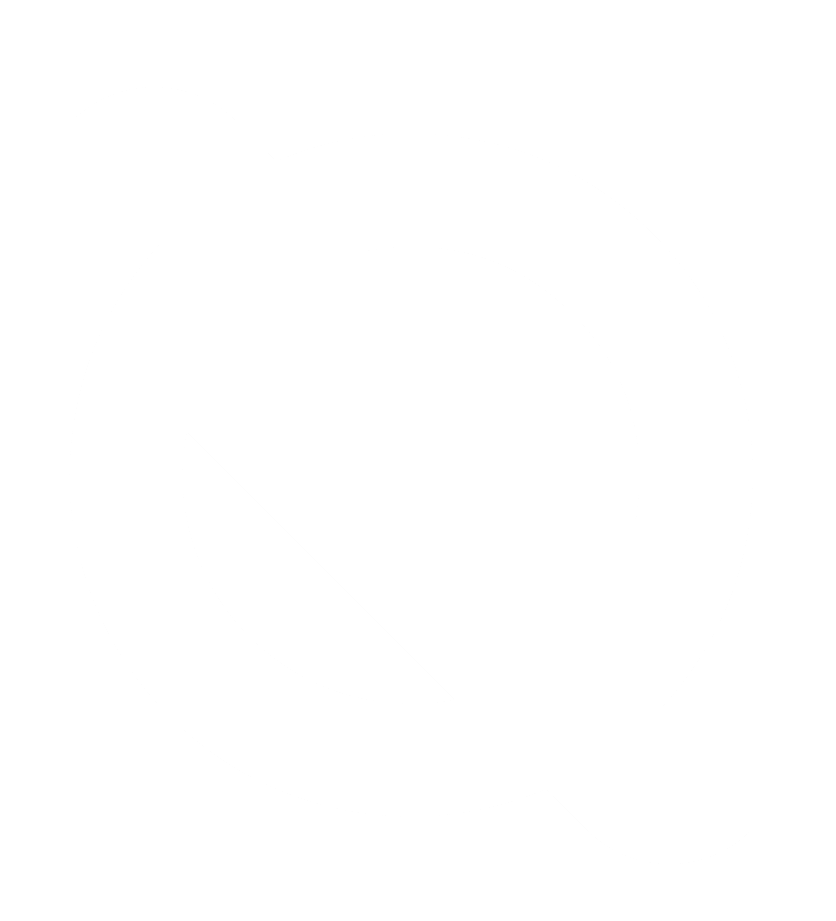

Demand for AI-generated visuals is skyrocketing, with applications ranging from digital art to advertising and gaming. However, text-to-image (T2I) models often require massive computational resources, which limits their real-world deployment. At Quambase, we push AI boundaries by integrating cutting-edge efficiency techniques and quantum-inspired advancements. One such breakthrough is 1.58-bit FLUX, a quantization method that shrinks model size, cuts memory usage, and speeds up inference, all while maintaining high image quality.

What is 1.58-bit FLUX?

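1.58-bit FLUX is a quantization technique applied to the FLUX.1-dev text-to-image model. It restricts the model's weights to just three possible values (-1, 0, +1). Encoding one of three values takes log2(3) ≈ 1.58 bits, which is where the name comes from. Compared with the original model, this delivers: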

- 7.7× reduction in model storage 📦

- 5.1× reduction in inference memory usage 🔋

- 13.2% faster inference ⚡
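The post does not spell out how full-precision weights are mapped to these three values. As an illustration only, here is a minimal sketch assuming the absmean recipe popularized by BitNet b1.58: divide the weights by the mean of their absolute values, round, and clip to [-1, 1]. The function names are hypothetical, and 1.58-bit FLUX may use a different quantizer.

```python
import math
import random

def ternary_quantize(weights, eps=1e-8):
    """Map a flat list of float weights to {-1, 0, +1} plus one shared scale.

    Assumption: the absmean recipe from BitNet b1.58; the post does not say
    which quantizer 1.58-bit FLUX actually uses.
    """
    scale = sum(abs(w) for w in weights) / len(weights) + eps  # mean |w|
    # Divide by the scale, round to the nearest integer, clip to [-1, 1].
    q = [max(-1, min(1, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q, scale):
    # Approximate full-precision weights, used here only to measure error.
    return [v * scale for v in q]

random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(1000)]
q, scale = ternary_quantize(weights)

print(sorted(set(q)))   # only -1, 0 and 1 can appear
print(math.log2(3))     # bits of information per ternary weight, about 1.585
err = sum(abs(w - r) for w, r in zip(weights, dequantize(q, scale))) / len(weights)
print(f"mean abs reconstruction error: {err:.5f}")
```

Storing one scale per tensor keeps the overhead negligible; finer-grained scales (per row or per channel) trade a little storage for lower error.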

Unlike quantization methods that rely on a calibration or fine-tuning dataset, 1.58-bit FLUX needs no image data at all: it relies solely on self-supervision from the original FLUX.1-dev model. This removes the need to collect training images and simplifies the quantization pipeline.
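To make "self-supervision without image data" concrete, the sketch below distills a single toy linear layer under assumptions that are ours, not the paper's: the full-precision layer acts as the teacher, random Gaussian vectors stand in for inputs, and only a per-row scale of the ternary student is fitted, in closed form by least squares, to match the teacher's outputs. All names are hypothetical; 1.58-bit FLUX operates on the full model, and its training details are not covered here.

```python
import random

def matvec(w, x):
    """Plain matrix-vector product: w is a list of rows."""
    return [sum(wi * xi for wi, xi in zip(row, x)) for row in w]

def ternarize_row(row, eps=1e-8):
    """Absmean ternarization of one row (an assumed recipe)."""
    s = sum(abs(v) for v in row) / len(row) + eps
    return [max(-1, min(1, round(v / s))) for v in row], s

def distill_scales(teacher_w, n_samples=256, seed=0):
    """Fit one scale per output row so the ternary student mimics the teacher.

    No image data is used: inputs are random Gaussian vectors (an assumption),
    and the full-precision teacher's outputs are the only supervision signal.
    """
    rng = random.Random(seed)
    dim = len(teacher_w[0])
    ternary = [ternarize_row(row)[0] for row in teacher_w]
    num = [0.0] * len(teacher_w)
    den = [0.0] * len(teacher_w)
    for _ in range(n_samples):
        x = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        t = matvec(teacher_w, x)   # teacher targets
        z = matvec(ternary, x)     # student outputs before scaling
        for j, (tj, zj) in enumerate(zip(t, z)):
            num[j] += tj * zj
            den[j] += zj * zj
    # Least-squares optimum per row: s_j = sum(t_j * z_j) / sum(z_j ** 2)
    scales = [n / d if d > 0 else 0.0 for n, d in zip(num, den)]
    return ternary, scales

def output_mse(teacher_w, ternary, scales, n_samples=256, seed=0):
    """Mean squared gap between teacher and scaled student outputs."""
    rng = random.Random(seed)
    dim = len(teacher_w[0])
    total = 0.0
    for _ in range(n_samples):
        x = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        t = matvec(teacher_w, x)
        z = matvec(ternary, x)
        total += sum((tj - s * zj) ** 2 for tj, s, zj in zip(t, scales, z))
    return total / n_samples

random.seed(1)
teacher = [[random.gauss(0.0, 0.02) for _ in range(16)] for _ in range(8)]
ternary, distilled = distill_scales(teacher)
absmean = [ternarize_row(row)[1] for row in teacher]
print(output_mse(teacher, ternary, absmean))    # absmean scales
print(output_mse(teacher, ternary, distilled))  # fitted scales, never larger here
```

Because `output_mse` replays the same seed and sample count, it scores both scale choices on exactly the inputs used for fitting, where the least-squares scales can never do worse than the absmean ones.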

Why Does 1.58-bit FLUX Matter?

With AI image generators such as Midjourney, DALL·E, and Stable Diffusion going mainstream, efficiency is key. 1.58-bit FLUX makes AI-powered creativity faster, more accessible, and more cost-effective in:

- Content creation & digital art 🎨

- Mobile AI applications 📱

- Augmented reality (AR) & virtual reality (VR) 🕶️

- AI-assisted graphic design 🖌️

Key Benefits of 1.58-bit FLUX

🚀 Supercharged AI Efficiency

- Compression Breakthrough: Reduces model storage by 7.7×, a major step toward mobile and embedded deployment.

- Memory Optimization: Decreases the inference memory footprint by 5.1×, so the model can run on GPUs with much less memory than full-precision FLUX requires.

- Faster Inference: A custom 1.58-bit kernel speeds up computation, making inference 13.2% faster on L20 GPUs.
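A back-of-the-envelope calculation shows how a storage figure just under 8× can arise. The sketch assumes 16-bit baseline weights, ternary weights packed at 2 bits each, and a hypothetical small fraction of parameters left unquantized. It ignores scale factors and file-format overhead, so it is an illustration rather than the paper's own accounting.

```python
import math

def compression_ratio(frac_quantized, bits_quantized, bits_full=16.0):
    """Storage ratio of a full-precision model to a partially quantized one.

    frac_quantized: fraction of parameters stored at `bits_quantized` bits;
    the rest stay at `bits_full` bits (16 for bf16/fp16).
    Ignores per-tensor scales and file-format overhead.
    """
    avg_bits = frac_quantized * bits_quantized + (1 - frac_quantized) * bits_full
    return bits_full / avg_bits

# Ideal ternary encoding, every parameter quantized: 16 / log2(3)
print(round(compression_ratio(1.0, math.log2(3)), 2))  # 10.09
# Practical 2-bit packing (4 ternary weights per byte), everything quantized
print(compression_ratio(1.0, 2.0))                      # 8.0
# Hypothetical: 0.5% of parameters kept in 16-bit, the rest packed at 2 bits
print(round(compression_ratio(0.995, 2.0), 2))          # 7.73
```

Even a small share of full-precision parameters pulls the ratio noticeably below the 8× ceiling of 2-bit packing, because each of those parameters costs eight times as much storage.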

🎨 Image Quality Without Compromise

Despite the extreme quantization, 1.58-bit FLUX generates images of quality comparable to the original FLUX.1-dev model, as measured on the GenEval and T2I-CompBench benchmarks (Figures 3 & 4 show side-by-side image comparisons).

🛠 Optimized for Real-World Deployment

A custom kernel tailored for 1.58-bit operations works directly with the compressed weights, turning the storage savings into lower memory use and faster inference in practice.
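The post does not describe the kernel's internals. The sketch below shows, under our own assumptions, two ideas that 1.58-bit kernels commonly exploit: ternary weights fit in 2 bits, so four can be packed per byte, and a dot product with weights in {-1, 0, +1} needs only additions and subtractions plus one multiply by the shared scale. The 2-bit code layout and function names are hypothetical, and a real GPU kernel would process many values in parallel rather than loop in Python.

```python
# Hypothetical 2-bit code for each ternary value.
ENCODE = {0: 0b00, 1: 0b01, -1: 0b10}
DECODE = {code: value for value, code in ENCODE.items()}

def pack(ternary):
    """Pack ternary values four per byte, 2 bits each."""
    out = bytearray((len(ternary) + 3) // 4)
    for i, v in enumerate(ternary):
        out[i // 4] |= ENCODE[v] << (2 * (i % 4))
    return bytes(out)

def unpack(packed, n):
    """Inverse of pack(): recover the first n ternary values."""
    return [DECODE[(packed[i // 4] >> (2 * (i % 4))) & 0b11] for i in range(n)]

def ternary_dot(packed, n, x, scale):
    """Dot product with packed ternary weights using only adds and subtracts."""
    acc = 0.0
    for i, w in enumerate(unpack(packed, n)):
        if w == 1:
            acc += x[i]
        elif w == -1:
            acc -= x[i]
        # w == 0 contributes nothing, so it is skipped entirely
    return scale * acc  # a single multiply by the shared scale

w = [1, 0, -1, -1, 1, 0, 0, 1]
x = [0.5, 2.0, 1.5, -1.0, 3.0, 4.0, -2.0, 0.25]
packed = pack(w)
print(len(packed))                   # 2 bytes for 8 weights
print(unpack(packed, len(w)) == w)   # True
print(ternary_dot(packed, len(w), x, scale=0.1))  # 0.1 * 3.25, about 0.325
```

Zero weights are skipped outright, so sparsity in the ternary matrix translates directly into less work.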

Challenges & Future Directions

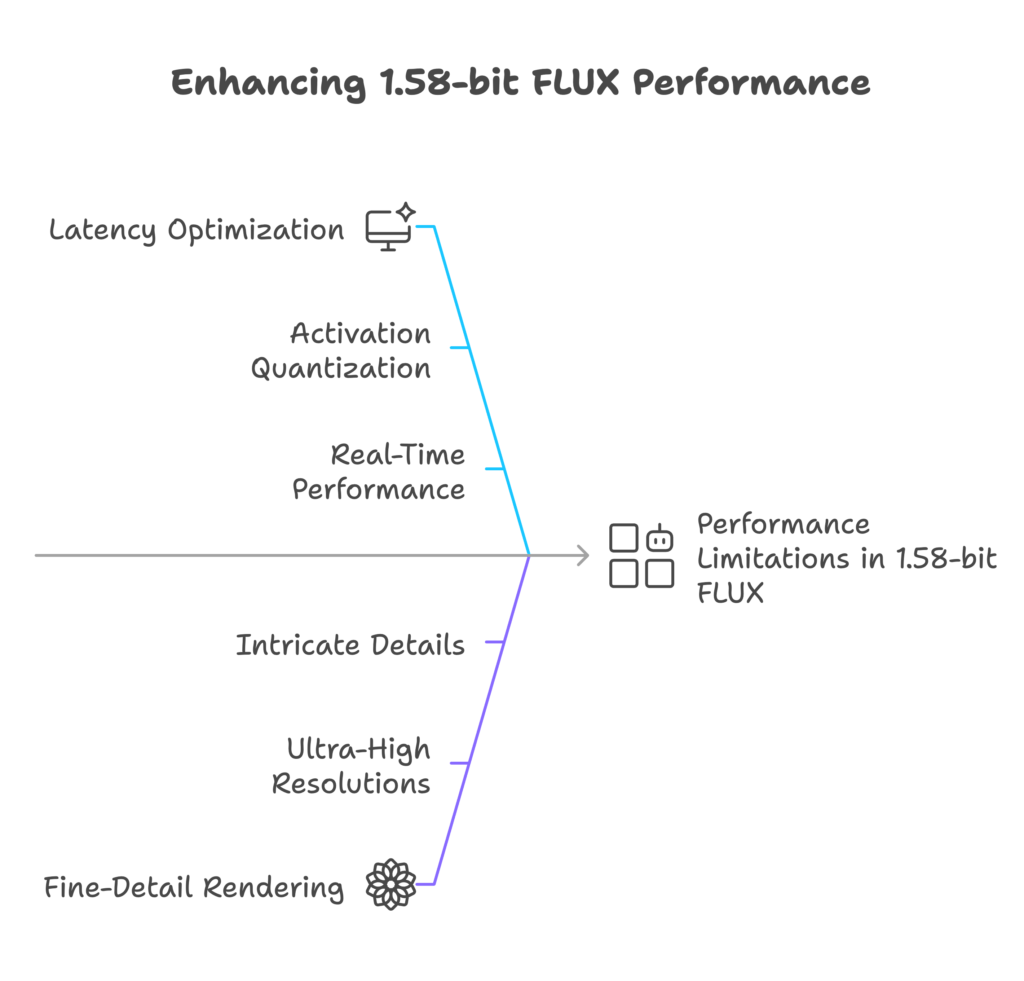

While 1.58-bit FLUX is a breakthrough, some areas need improvement:

- Latency Optimization: Only the weights are quantized, so the speedup is modest; quantizing activations as well could further improve real-time performance.

- Fine-Detail Rendering: At ultra-high resolutions, full-precision FLUX has a slight edge in intricate details.
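To illustrate what activation quantization could add, the sketch assumes symmetric 8-bit absmax quantization of each activation vector, the scheme used by BitNet b1.58; 1.58-bit FLUX itself keeps activations in full precision. With both operands as integers, the inner loop of a ternary matrix product becomes pure integer addition and subtraction. Names and parameters are hypothetical.

```python
def quantize_activations(x, bits=8):
    """Symmetric absmax quantization of one activation vector to signed ints.

    Assumption: per-vector 8-bit absmax, as in BitNet b1.58.
    """
    qmax = 2 ** (bits - 1) - 1               # 127 for 8 bits
    absmax = max(abs(v) for v in x) or 1.0   # avoid division by zero
    scale = absmax / qmax
    q = [max(-qmax, min(qmax, round(v / scale))) for v in x]
    return q, scale

def int_ternary_dot(w_ternary, x_q):
    """Ternary weights times integer activations: only integer adds/subtracts."""
    return sum(xq if w == 1 else -xq if w == -1 else 0
               for w, xq in zip(w_ternary, x_q))

x = [0.5, 2.0, 1.5, -1.0, 3.0, 4.0, -2.0, 0.25]
w = [1, 0, -1, -1, 1, 0, 0, 1]
w_scale = 0.1
x_q, x_scale = quantize_activations(x)

# Rescale the integer result once, using both the weight and activation scales.
approx = int_ternary_dot(w, x_q) * w_scale * x_scale
exact = w_scale * sum(wi * xi for wi, xi in zip(w, x))
print(approx, exact)
```

The small gap between `approx` and `exact` is the rounding error introduced by 8-bit activations; the payoff is that the accumulation itself no longer needs floating-point arithmetic.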

Future research will focus on activation-aware quantization, advanced kernel optimizations, and higher-resolution fidelity.

Quambase: Powering AI & Quantum Innovation

At Quambase, we specialize in AI efficiency, quantum computing, and next-gen model development. Our mission is to push the limits of AI performance while ensuring practical deployment. 1.58-bit FLUX is a prime example of our commitment to scalable AI solutions.

Conclusion: The New Standard for AI Efficiency

1.58-bit FLUX shows that extreme low-bit quantization can preserve image quality comparable to the full-precision model while sharply cutting storage and memory costs. This breakthrough points the way toward T2I models that make AI-generated visuals faster, lighter, and more accessible than ever before.